By: Loretta Kyei

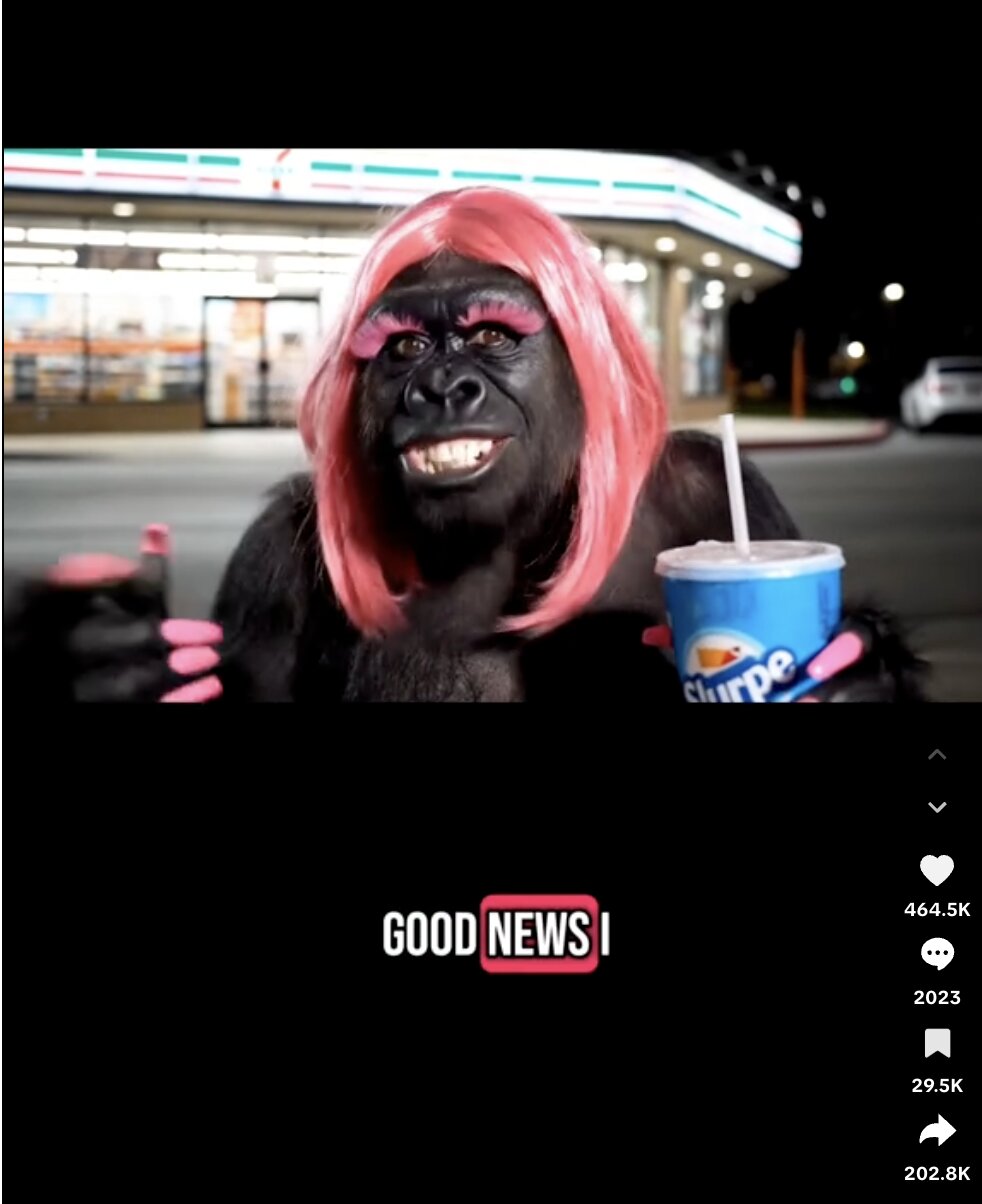

TikTok has been increasingly inundated with a new wave of “AI Slop” that weaponizes high-fidelity (meaning sharp and realistic) video generation to target and dehumanize marginalized communities.[1] Since the release of high-fidelity tools like Google’s Veo 3 and OpenAI’s Sora 2, bad actors have leveraged photorealistic text-to-video capabilities to generate sophisticated racist content that often bypasses traditional moderation filters.[2] For example, Media Matters flagged a clip titled “Average Waffle House in Atlanta.”[3] The clip depicted a restaurant filled with AI-generated monkeys holding buckets of fried chicken and watermelon, a video explicitly designed to revive minstrel caricatures.[4] These videos are also being used to manufacture political resentment and social division through engineered “outrage” content.[5]

In the days leading up to the suspension of federal Supplemental Nutrition Assistance Program (SNAP) benefits, several AI-generated videos of Black women went viral, designed to reinforce damaging stereotypes.[6] One such video portrayed a Black woman in a house full of crying infants, screaming about the loss of her EBT benefits and claiming it was the taxpayer’s responsibility to support her and her seven children.[7] Other videos depicted similar AI-generated Black women shouting in public after being unable to use food stamps.[8] These fake videos received millions of views and potentially contributed to misconceptions about who uses SNAP and deepened longstanding harmful racial stereotypes.[9]

These AI-generated videos brought to light antiquated myths that people who receive benefits from SNAP and other government assistance are taking advantage of these programs and choosing not to work.[10] In reality, SNAP provides food assistance benefits to approximately 42 million Americans, with white people making up the largest share of beneficiaries, and many either working or actively seeking employment.[11] Furthermore, SNAP fraud remains exceedingly rare, as data indicates that more than 98% of beneficiaries are fully eligible for the program.[12] Despite this, the deceptive nature of this AI-generated content even led major outlets like Fox News to broadcast these videos as authentic reactions to the Trump administration’s decision to withhold payments during the government shutdown.[13]

The impact of these AI-generated videos extends far beyond TikTok and other social media platforms as they cement pervasive stereotypes that subconsciously warp public perception of Black women.[14] These videos fuel misogynoir, a specific and compounded form of gendered racism that targets Black women uniquely.[15] This digital propaganda specifically weaponizes the “angry Black woman” and “strong Black woman” archetypes, tropes that have been used to justify the dismissal of Black women’s emotions or the neglect of their physical and mental well-being.[16]

Moreover, while companies like Google and OpenAI publicly commit to blocking harmful prompts, the viral presence of these videos suggests that current safety guardrails are fundamentally flawed.[17] This failure carries a dual human cost: the systemic dehumanization of minority populations through the distribution of digital caricatures, and the severe psychological trauma inflicted on the invisible workforce tasked with cleaning up the mess. In countries like Kenya, data workers earning as little as $1.32 to $2 per hour are forced to review thousands of hours of vitriolic content to train these AI models, essentially serving as a human firewall for multibillion-dollar corporations.[18]

In May 2025, Arizona passed SB 1462, amending A.R.S. § 13-1425 to modernize the state’s laws against the unlawful disclosure of images.[19] Crucially, the legislation expanded the definition of an “image” to include “computer generated pictorial representations.”[20] Under this statute, disclosure is deemed criminal if it is performed with the intent to cause physical, financial, or serious emotional distress to the depicted individual.[21] While this and similar laws passed since 2019 primarily target AI-generated “revenge porn,”[22] there is a compelling argument for expanding these protections to cover AI-generated racist content. The unique psychological and systemic harms of AI-generated bigotry necessitate a legal framework that treats digital character assassination with the same gravity as sexual exploitation.

A significant hurdle to this solution is that many “AI Slop” videos depict composite or non-existent personas rather than specific individuals, potentially placing them outside the reach of current defamation or privacy laws. However, establishing strict criminal penalties for AI-generated racist content that does target real people—such as the widely condemned video shared by President Donald Trump depicting President Barack Obama and First Lady Michelle Obama as apes[23]—would set a vital precedent. By codifying these acts as criminal rather than merely “violative of terms of service,” the law would compel tech companies to prioritize more rigorous moderation. Without such intervention, marginalized communities, particularly Black women, will continue to bear the brunt of a digital ecosystem that allows their likenesses and identities to be weaponized for profit and mockery.

[1] Dan Milmo, Anti-immigrant material among AI-generated content getting billions of views on TikTok, The Guardian (Dec. 3, 2025, 1:00 PM EST), https://www.theguardian.com/ [https://perma.cc/65MA-Q2WX].

[2] Abbie Richards, Racist AI-generated videos are the newest slop garnering millions of views on TikTok, Media Matters For America (Jul. 1, 2025, 11:47 AM EDT), https://www.mediamatters.org/ [https://perma.cc/U8CJ-5FHS].

[3] Waffle House video has over 620,000 views, Media Matters For America, https://www.mediamatters.org/ [https://perma.cc/9D57-GLJ8].

[4] Richards, supra note 2.

[5] Steven Lee Myers & Stuart A. Thompson, A.I. Videos Have Flooded Social Media. No One Was Ready, New York Times (Dec. 8, 2025), https://www.nytimes.com/2025/12/08/technology/ai-slop-sora-social-media.html.

[6] Janice Gassam Asare, How Anti-Black AI Videos Harm Black Women At Work, Forbes (Nov. 7, 2025, 2:01 PM EST), https://www.forbes.com/sites/janicegassam/2025/11/07/how-anti-black-ai-videos-harm-black-women-at-work/.

[7] Id.

[8] Id.

[9] Irving Washington, Hagere Yilma, & Joel Luther, Fake AI-Generated Videos Perpetuate Stereotypes About SNAP Recipients, And New KFF Poll Looks at Belief in the False Claim That Undocumented Immigrants Are Eligible for ACA Coverage, KFF (Nov. 24, 2025), https://www.kff.org/ [https://perma.cc/6BVX-B9RW].

[10] ‘Welfare Queen’ Becomes Issue in Reagan Campaign, New York Times (Feb. 15, 1976), https://www.nytimes.com/1976/02/15/archives/welfare-queen-becomes-issue-in-reagan-campaign-hitting-a-nerve-now.html.

[11] Mia Monkovic & Ben Ward, Characteristics of Supplemental Nutrition Assistance Program Households: Fiscal Year 2023, United States Department of Agriculture (Apr. 2025), https://fns-prod.azureedge.us/ [https://perma.cc/4ZAL-2Q7Z].

[12] USDA Efforts to Reduce Waste, Abuse in the Supplemental Nutrition Assistance Program (SNAP), United States Department of Agriculture, https://www.fns.usda.gov/ [https://perma.cc/CS78-WN6X].

[13] Now AI fakes are fooling news outlets – and maybe AI pros?, Business Insider, https://www.businessinsider.com/ [https://perma.cc/RAK2-C42G].

[14] Asare, supra note 6.

[15] Janice Gassam Asare, Misogynoir: The Unique Discrimination That Black Women Face, Forbes, https://www.forbes.com/sites/janicegassam/2020/09/22/misogynoir-the-unique-discrimination-that-black-women-face/.

[16] Asare, supra note 6.

[17] Dara Kerr, OpenAI launch of video app Sora plagued by violent and racist images: ‘The guardrails are not real’, The Guardian (Oct. 4, 2025, 9:00 EDT), https://www.theguardian.com/ [https://perma.cc/7SKT-KHNQ].

[18] Andrea Marks, Bestiality And Beyond: ChatGPT Works Because Underpaid Workers Read About Horrible Things, Rolling Stone (Jan. 18, 2023), https://www.rollingstone.com/culture/culture-news/chatgtp-moderators-labeling-violent-content-ptsd-1234662975/.

[19] 2025 Ariz. Sess. Laws 1462.

[20] Id.

[21] Id.

[22] Deceptive Audio or Visual Media (‘Deepfakes’) 2024 Legislation, National Conference of State Legislatures (Nov. 22, 2024), https://www.ncsl.org/technology-and-communication/deceptive-audio-or-visual-media-deepfakes-2024-legislation.

[23] Chandelis Duster, Obama responds to Trump sharing racist AI video depicting him as an ape, NPR (Feb. 15, 2026, 1:45 PM ET), https://www.npr.org/ [https://perma.cc/7AGA-R9BM].